What is a Robots.txt File? Everything You Need To Write, Submit, and Recrawl a Robots File for SEO

We’ve written a comprehensive article on how search engines find, crawl, and index your websites. A foundational step in that process is the robots.txt file, the gateway for a search engine to crawl your site. Understanding how to construct a robots.txt file properly is essential in search engine optimization (SEO).

This simple yet powerful tool helps webmasters control how search engines interact with their websites. Used effectively, it supports efficient crawling and better visibility in search engine results.

What is a Robots.txt File?

A robots.txt file is a plain text file located in the root directory of a website. Its primary purpose is to tell search engine crawlers which parts of the site they should or should not crawl. The file uses the Robots Exclusion Protocol (REP), a standard websites use to communicate with web crawlers and other web robots.
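Because the file always lives at the root of a host, its location can be derived from any page URL on that host. The following is a minimal sketch of that idea, assuming Python and its standard `urllib.parse` module; the function name `robots_txt_url` and the example.com URL are hypothetical and used only for illustration.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_txt_url(page_url: str) -> str:
    """Return the robots.txt URL for the host that serves page_url."""
    parts = urlsplit(page_url)
    # Keep the scheme and host (including any port); drop path, query, and fragment,
    # because robots.txt sits at the root of the host.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


print(robots_txt_url("https://example.com/blog/post?id=7"))
# https://example.com/robots.txt
```

Note that each subdomain is a separate host, so a hypothetical shop.example.com would need its own robots.txt file.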

For most of its history, the REP was a de facto convention rather than an official Internet standard. It was formalized as RFC 9309 in September 2022 and is supported by major search engines. Documentation from search engines like Google, Bing, and Yandex remains the most practical reference. For more information, see Google’s Robots.txt Specifications.

Why is Robots.txt Critical to SEO?

- Controlled Crawling: Robots.txt allows website owners to prevent search engines from accessing specific sections of their site. This is particularly useful for excluding duplicate content, private areas, or sections with sensitive information.

- Optimized Crawl Budget: Search engines allocate a crawl budget for each website, the number of pages a search engine bot will crawl on a site. By disallowing irrelevant or less important sections, robots.txt helps optimize this crawl budget, ensuring that more significant pages are crawled and indexed.

- Improved Website Loading Time: By preventing bots from accessing unimportant resources, robots.txt can reduce server load, potentially improving the site’s loading time, a critical factor in SEO.

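- Preventing Indexing of Non-Public Pages: It helps keep non-public areas (like staging sites or development areas) from being crawled and appearing in search results. Because a disallowed URL can still be indexed if other pages link to it, a noindex tag or password protection is the more reliable way to keep a page out of results entirely.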

Robots.txt Essential Commands and Their Uses

- Allow: This directive is used to specify which pages or sections of the site should be accessed by the crawlers. For instance, if a website has a particularly relevant section for SEO, the ‘Allow’ command can ensure it’s crawled.

    Allow: /public/

- Disallow: The opposite of ‘Allow’, this command instructs search engine bots not to crawl certain parts of the website. This is useful for pages with no SEO value, like login pages or script files.

    Disallow: /private/

- Wildcards: Wildcards are used for pattern matching. The asterisk (*) represents any sequence of characters, and the dollar sign ($) signifies the end of a URL. These are useful for specifying a wide range of URLs.

    Disallow: /*.pdf$

- Sitemaps: Including a sitemap location in robots.txt helps search engines find and crawl all the important pages on a site. This is crucial for SEO as it aids in the faster and more complete indexing of a site.

    Sitemap: https://martech.zone/sitemap_index.xml

Robots.txt Additional Commands and Their Uses

- User-agent: Specifies which crawler the rules that follow apply to. ‘User-agent: *’ applies the rules to all crawlers. Example:

    User-agent: Googlebot

- Noindex: While never part of the standard robots.txt protocol, a Noindex directive in robots.txt was once understood by some search engines as an instruction not to index the specified URL. Google stopped supporting it in September 2019, so a noindex meta tag or X-Robots-Tag HTTP header is the reliable alternative.

    Noindex: /non-public-page/

- Crawl-delay: This command asks crawlers to wait a specific amount of time between hits to your server, useful for sites with server load issues. Googlebot ignores this directive, while some other crawlers, such as Bingbot, honor it.

    Crawl-delay: 10

How To Test Your Robots.txt File
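Rules can also be checked locally before they go live. The sketch below is a minimal example, assuming Python 3.8 or later and its standard `urllib.robotparser` module. The example.com URLs, the `ExampleBot` user agent, the `/private/press-kit/` path, and the `allowed` helper are all hypothetical. Python’s parser applies the first rule that matches, whereas Google applies the most specific one, so the narrower Allow line is placed before the broader Disallow line to get the same answer under both behaviors.

```python
from urllib.robotparser import RobotFileParser

# A hypothetical robots.txt combining the directives described above.
ROBOTS_TXT = """\
User-agent: *
Allow: /private/press-kit/
Disallow: /private/
Crawl-delay: 10

Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())  # parse text directly; no network request


def allowed(path: str, agent: str = "ExampleBot") -> bool:
    """Report whether the given agent may fetch the path under the rules above."""
    return parser.can_fetch(agent, "https://example.com" + path)


print(allowed("/public/page.html"))            # True: no rule matches
print(allowed("/private/report.html"))         # False: matches Disallow: /private/
print(allowed("/private/press-kit/logo.png"))  # True: the Allow rule matches first
print(parser.crawl_delay("ExampleBot"))        # 10
print(parser.site_maps())                      # ['https://example.com/sitemap.xml']
```

This parser treats rule paths as plain prefixes, so it is best suited to checking Allow, Disallow, and User-agent groups rather than wildcard patterns.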

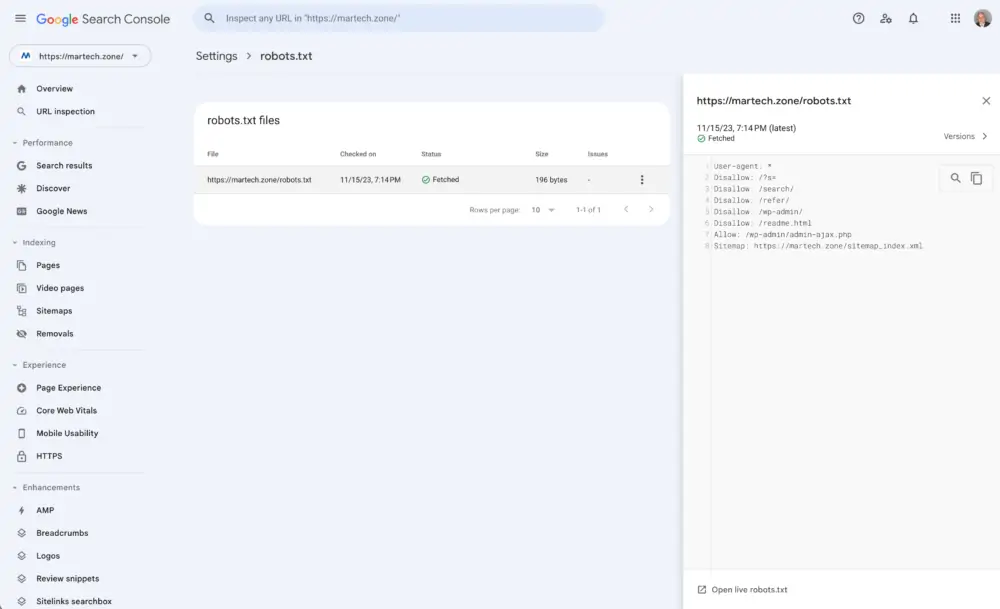

Though it’s buried in the interface, Google Search Console does offer a robots.txt file tester.

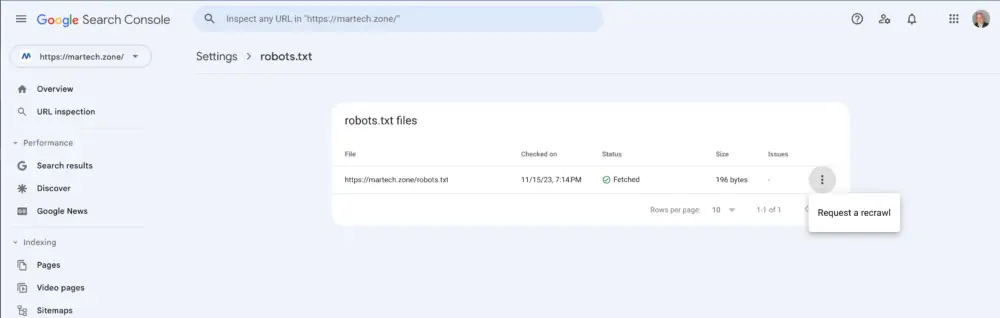

You can also resubmit your robots.txt file by clicking the three dots on the right and selecting Request a Recrawl.
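Wildcard rules such as `Disallow: /*.pdf$` are easy to get subtly wrong, so it can help to sanity-check them locally before requesting a recrawl. The sketch below is a simplified illustration of the matching described earlier (`*` for any sequence of characters, `$` for the end of the URL), assuming Python and its standard `re` module. It is not Google’s actual matcher, and `rule_to_regex` and `rule_matches` are hypothetical helper names.

```python
import re


def rule_to_regex(rule_path: str) -> re.Pattern:
    """Translate a robots.txt path pattern into a compiled regular expression."""
    # A trailing '$' anchors the rule to the end of the URL; otherwise it is a prefix match.
    anchored = rule_path.endswith("$")
    if anchored:
        rule_path = rule_path[:-1]
    # Escape the literal pieces and join them with '.*', one per '*' wildcard.
    body = ".*".join(re.escape(piece) for piece in rule_path.split("*"))
    return re.compile(body + ("$" if anchored else ""))


def rule_matches(rule_path: str, url_path: str) -> bool:
    """Return True if the rule pattern matches the URL path from its start."""
    return rule_to_regex(rule_path).match(url_path) is not None


print(rule_matches("/*.pdf$", "/docs/guide.pdf"))      # True
print(rule_matches("/*.pdf$", "/docs/guide.pdf?x=1"))  # False: URL does not end in .pdf
print(rule_matches("/private/", "/private/a.html"))    # True: prefix match
print(rule_matches("/private/", "/public/a.html"))     # False
```

The second case shows why the `$` anchor matters: with it, a PDF URL carrying a query string is no longer covered by the rule.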


Can The Robots.txt File Be Used To Control AI Bots?

The robots.txt file can be used to define whether AI bots, including web crawlers and other automated bots, can crawl or utilize the content on your site. The file guides these bots, indicating which parts of the website they are allowed or disallowed from accessing. The effectiveness of robots.txt controlling the behavior of AI bots depends on several factors:

- Adherence to the Protocol: Most reputable search engine crawlers and many other AI bots respect the rules set in robots.txt. However, it’s important to note that the file is more of a request than an enforceable restriction. Bots can ignore these requests, especially those operated by less scrupulous entities.

- Specificity of Instructions: You can specify different instructions for different bots. For instance, you might allow specific AI bots to crawl your site while disallowing others. This is done using the User-agent directive in the robots.txt file example above. For example, User-agent: Googlebot would specify instructions for Google’s crawler, whereas User-agent: * would apply to all bots.

- Limitations: While robots.txt can prevent bots from crawling specified content, it doesn’t hide the content from them if they already know the URL. Additionally, it does not provide any means to restrict the usage of the content once it has been crawled. If content protection or specific usage restrictions are required, other methods like password protection or more sophisticated access control mechanisms might be necessary.

- Types of Bots: Not all AI bots are related to search engines. Various bots are used for different purposes (e.g., data aggregation, analytics, content scraping). The robots.txt file can also be used to manage access for these different types of bots, as long as they adhere to the REP.
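The per-bot instructions described above can be sketched as follows. This is a minimal example, assuming Python and its standard `urllib.robotparser` module, and it only shows what a compliant bot would conclude from the file. `GPTBot`, the user-agent token OpenAI publishes for its crawler, stands in for an AI bot here as an assumption; `SomeOtherBot`, the example.com URLs, and the paths are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: block one AI crawler, allow Googlebot everywhere,
# and keep every other bot out of /drafts/.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: Googlebot
Allow: /

User-agent: *
Disallow: /drafts/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())  # parse text directly; no network request

for agent in ("GPTBot", "Googlebot", "SomeOtherBot"):
    for path in ("/articles/post.html", "/drafts/idea.html"):
        verdict = parser.can_fetch(agent, "https://example.com" + path)
        print(f"{agent:12} {path:22} -> {'allowed' if verdict else 'blocked'}")
```

Under these rules GPTBot is blocked from both paths, Googlebot is allowed on both (a crawler that matches its own group does not fall back to the `*` group, so `/drafts/` stays open to it), and any other bot is blocked only from `/drafts/`. None of this stops a bot that chooses to ignore the file.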

The robots.txt file can be an effective tool for signaling your preferences regarding the crawling and utilization of site content by AI bots. However, its capabilities are limited to providing guidelines rather than enforcing strict access control, and its effectiveness depends on the compliance of the bots with the Robots Exclusion Protocol.

The robots.txt file is a small but mighty tool in the SEO arsenal. It can significantly influence a website’s visibility and search engine performance when used correctly. By controlling which parts of a site are crawled and indexed, webmasters can ensure that their most valuable content is highlighted, improving their SEO efforts and website performance.